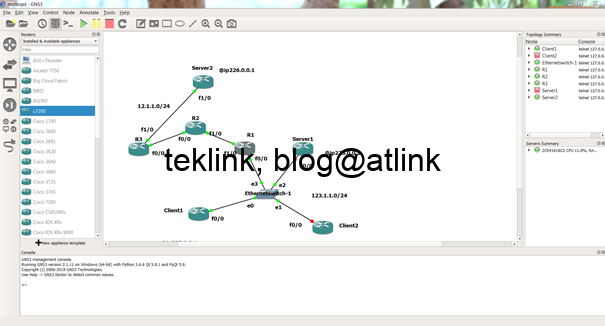

Server2 is 3 hops away from its receivers

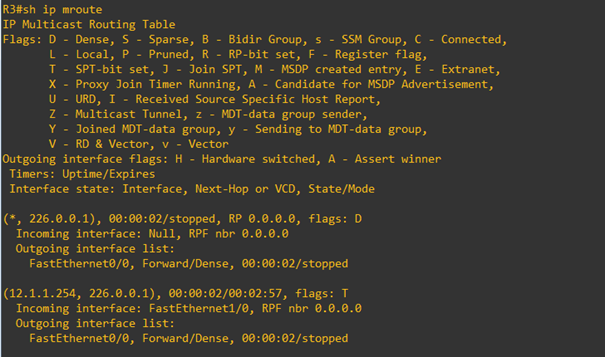

R3 receives the multicast traffic and forwards it out of the interfaces in its outgoing interface list
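A minimal sketch of the replication step follows. It assumes a router simply copies the packet to every interface in its outgoing interface list (OIL) except the one the packet arrived on. The interface names are hypothetical and not taken from the lab screenshots.

```python
from typing import List


def replicate(incoming: str, oil: List[str]) -> List[str]:
    """Return the interfaces a multicast packet is copied to.

    The packet goes out of every interface in the outgoing interface
    list (OIL), but never back out of the interface it arrived on.
    """
    return [ifc for ifc in oil if ifc != incoming]


# Hypothetical interface names for R3
print(replicate("Serial0/0", ["Serial0/0", "FastEthernet0/0", "Serial0/1"]))
# → ['FastEthernet0/0', 'Serial0/1']
```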

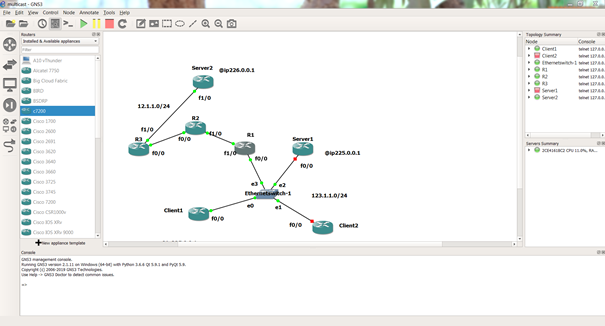

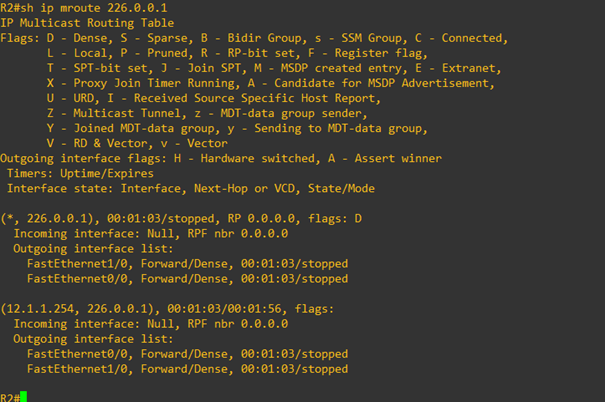

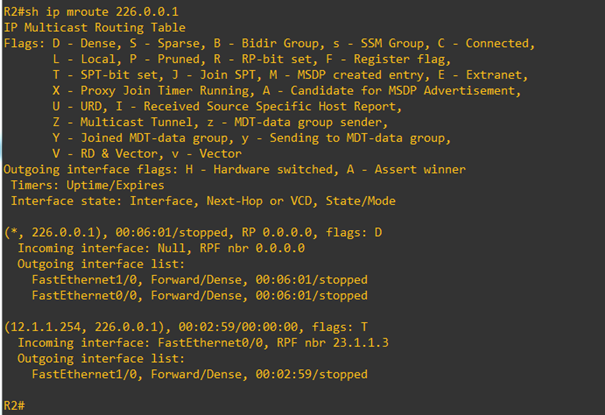

At R2

At R2, the outgoing interface list also includes the incoming interface

The incoming interface is Null and the RPF neighbor information is incorrect

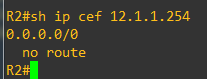

We verify that R2 has no route back to the source

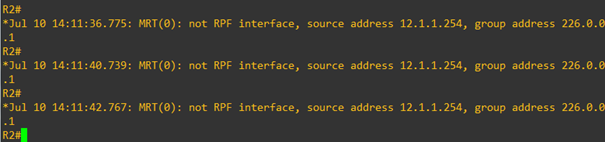

We enable multicast routing debugging and confirm that the RPF (Reverse Path Forwarding) check fails

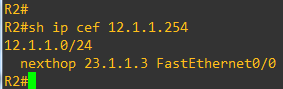

We configure a static route to Server2

Now the mroute table is populated with correct information
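The RPF failure on R2 and the static-route fix can be sketched in Python. This is a simplified model, assuming the RPF check is a longest-prefix lookup of the source address in the unicast routing table that passes only when the resulting interface matches the interface the packet arrived on. The addresses and interface names (10.3.3.10 for Server2, Serial0/0, Serial0/1) are hypothetical.

```python
import ipaddress
from typing import Dict, Optional


def rpf_interface(routes: Dict[str, str], source: str) -> Optional[str]:
    """Return the interface of the longest-prefix route to `source`, or None."""
    src = ipaddress.ip_address(source)
    best_len, best_ifc = -1, None
    for prefix, ifc in routes.items():
        net = ipaddress.ip_network(prefix)
        if src in net and net.prefixlen > best_len:
            best_len, best_ifc = net.prefixlen, ifc
    return best_ifc


def rpf_check(routes: Dict[str, str], source: str, arrival_ifc: str) -> bool:
    """Pass only if the packet arrived on the interface used to reach the source."""
    return rpf_interface(routes, source) == arrival_ifc


# Hypothetical addressing: Server2 is 10.3.3.10, reached through Serial0/1
r2_routes = {"192.168.12.0/24": "Serial0/0"}  # no route back to Server2
print(rpf_check(r2_routes, "10.3.3.10", "Serial0/1"))  # → False

r2_routes["10.3.3.0/24"] = "Serial0/1"  # the static route makes the lookup succeed
print(rpf_check(r2_routes, "10.3.3.10", "Serial0/1"))  # → True
```

With no matching route the lookup returns None, so the check can never pass; this mirrors the Null incoming interface seen on R2 before the static route is added.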

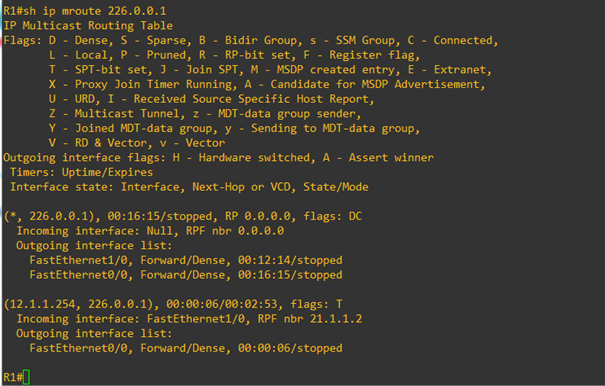

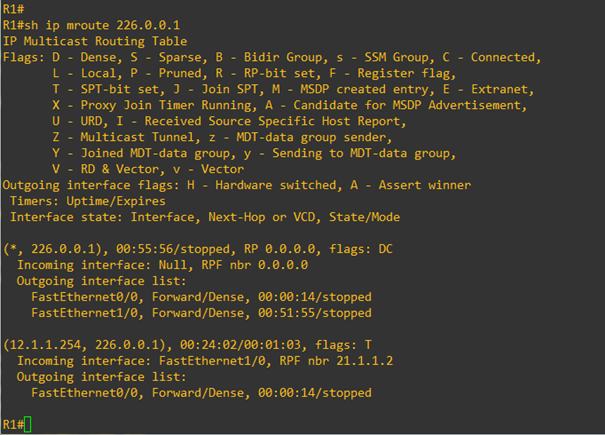

We do the same on R1 and check the mroute entry for the 226.0.0.1 multicast group

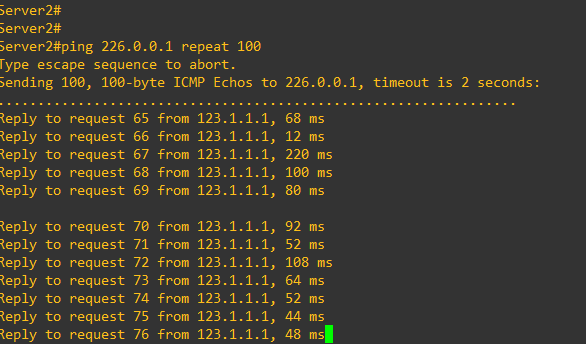

We verify that Server1 now receives replies from Client1 to its pings

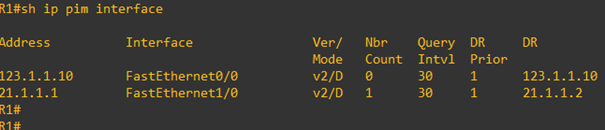

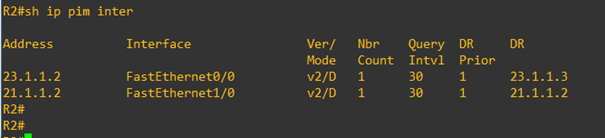

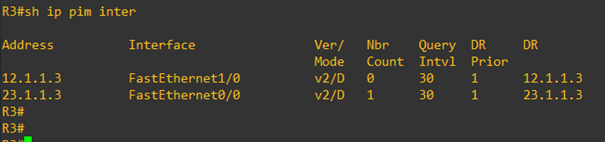

In this lab PIM is configured in dense mode

At R2

At R3

PIM (Protocol Independent Multicast) is the multicast routing protocol that delivers multicast traffic across a routed network such as our lab setup

The dense-mode variant of PIM floods the server's multicast traffic throughout the multicast domain (all routers and interfaces configured for PIM), then prunes the branches that have no interested receivers
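Flood and prune can be illustrated with a small recursive sketch. It assumes the flooded traffic follows a tree rooted at the source's first-hop router, and that a router prunes once it has no directly connected members and every downstream neighbor has pruned. The chain R3, R2, R1 is assumed from the lab; R4 is a hypothetical extra router with no receivers.

```python
from typing import Dict, List, Set


def flood_and_prune(tree: Dict[str, List[str]], members: Set[str], root: str) -> Set[str]:
    """Return the routers that still forward the group after pruning.

    tree:    router -> downstream PIM neighbors (the flooded, RPF-based tree)
    members: routers with at least one directly connected group member
    root:    the first-hop router attached to the source
    """
    kept: Set[str] = set()

    def visit(router: str) -> bool:
        # Flood: traffic reaches every downstream neighbor first.
        # A list (not a generator) is used so that every child is visited.
        children_want = [visit(child) for child in tree.get(router, [])]
        # Prune: keep the branch only if there is a local member
        # or at least one downstream neighbor did not prune.
        keep = router in members or any(children_want)
        if keep:
            kept.add(router)
        return keep

    visit(root)
    return kept


# Assumed lab chain R3 -> R2 -> R1, plus a hypothetical R4 with no receivers
tree = {"R3": ["R2"], "R2": ["R1", "R4"]}
print(sorted(flood_and_prune(tree, {"R1"}, "R3")))  # → ['R1', 'R2', 'R3']
print(sorted(flood_and_prune(tree, set(), "R3")))   # → []
```

The sketch ignores timers: in real PIM dense mode, prune state expires and the traffic is flooded again, so the prune cycle repeats for as long as the source keeps sending.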

Let’s check the flood and prune behavior of PIM dense mode

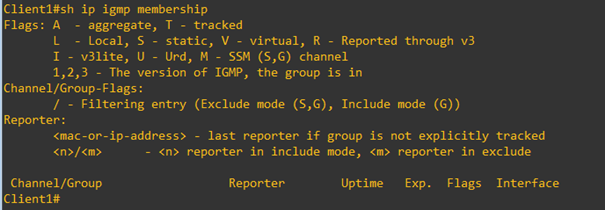

Client1 is no longer interested in 226.0.0.1

How would R1 react?

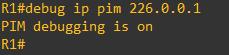

To watch PIM in action, we enable debug ip pim

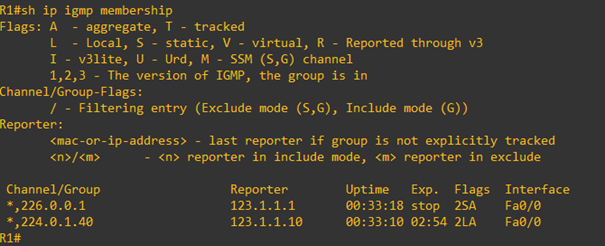

We check the active IGMP memberships

We notice that 226.0.0.1 in the IGMP table is flagged S for static and A for aggregate

The Exp. (expiry) field, which tracks the membership timer, shows that Client1 has stopped joining the group

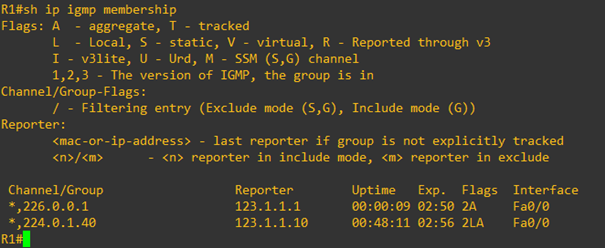

As a test, we reconfigure Client1 to join the group again and check the result

The Exp. field countdown is running again
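The behavior of the Exp. countdown can be modeled as a per-group timer that each membership report restarts. This is a sketch assuming the IGMPv2 default values from RFC 2236 (robustness variable 2, query interval 125 s, query response interval 10 s); the timer values in the lab screenshots may differ, and static memberships are not modeled. The class name is hypothetical.

```python
# IGMPv2 defaults (RFC 2236): robustness variable 2, query interval 125 s,
# query response interval 10 s.
ROBUSTNESS = 2
QUERY_INTERVAL = 125
QUERY_RESPONSE_INTERVAL = 10
GROUP_MEMBERSHIP_INTERVAL = ROBUSTNESS * QUERY_INTERVAL + QUERY_RESPONSE_INTERVAL  # 260 s


class GroupMembership:
    """Countdown kept by the router for one group on one interface."""

    def __init__(self) -> None:
        self.expires_in = GROUP_MEMBERSHIP_INTERVAL

    def report_received(self) -> None:
        # Every membership report from a host restarts the countdown.
        self.expires_in = GROUP_MEMBERSHIP_INTERVAL

    def tick(self, seconds: int) -> None:
        # Time passes with no reports: count down, never below zero.
        self.expires_in = max(0, self.expires_in - seconds)

    @property
    def active(self) -> bool:
        return self.expires_in > 0


m = GroupMembership()
m.tick(200)
print(m.expires_in, m.active)  # → 60 True
m.tick(60)
print(m.expires_in, m.active)  # → 0 False
m.report_received()
print(m.expires_in, m.active)  # → 260 True
```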

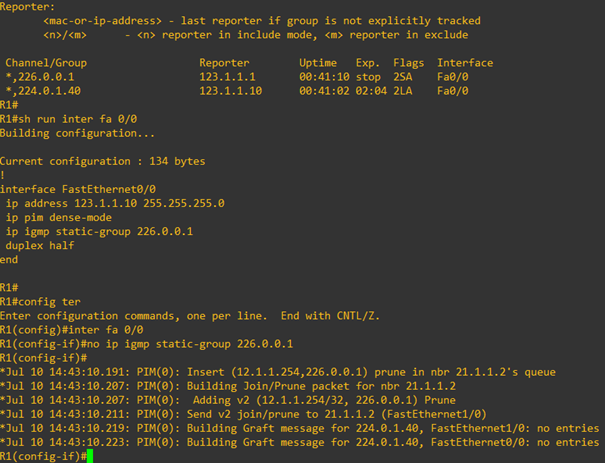

As soon as the IGMP memberships (static or dynamic) are removed, R1 inserts a prune into its RPF neighbor's queue

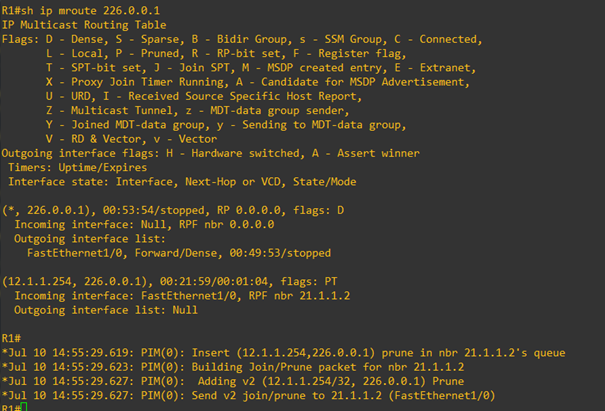

In the mroute table the entry is flagged as P, which indicates that it’s pruned

Additionally, the outgoing interface list changes to Null

If Client1 joins again, R1 builds a Graft message for its PIM RPF neighbor to request the group traffic again, and adds the corresponding interface back to the outgoing interface list
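R1's reaction can be summarized as a small state machine on its mroute entry. In PIM dense mode the message used to rejoin a pruned branch is a Graft (RFC 3973). The sketch below is a hypothetical, simplified model: it ignores prune timers, Graft-Ack messages and assert handling, and the interface name and neighbor address are invented for illustration.

```python
from typing import List, Set, Tuple


class MrouteEntry:
    """Hypothetical, minimal model of R1's entry for one group in PIM dense mode."""

    def __init__(self, group: str, rpf_neighbor: str) -> None:
        self.group = group
        self.rpf_neighbor = rpf_neighbor
        self.oil: Set[str] = set()    # outgoing interface list ("Null" when empty)
        self.flags: Set[str] = set()  # "P" means pruned
        self.queued: List[Tuple[str, str, str]] = []  # messages toward the RPF neighbor

    def member_joined(self, ifc: str) -> None:
        self.oil.add(ifc)
        if "P" in self.flags:
            # Rejoin a pruned branch: ask the upstream neighbor for the traffic again.
            self.flags.discard("P")
            self.queued.append(("Graft", self.group, self.rpf_neighbor))

    def member_left(self, ifc: str) -> None:
        self.oil.discard(ifc)
        if not self.oil and "P" not in self.flags:
            # No interested interface left: mark the entry pruned and prune upstream.
            self.flags.add("P")
            self.queued.append(("Prune", self.group, self.rpf_neighbor))


# Hypothetical interface and neighbor address
entry = MrouteEntry("226.0.0.1", "10.0.12.2")
entry.member_joined("FastEthernet0/0")
entry.member_left("FastEthernet0/0")
print(entry.flags, entry.oil)  # → {'P'} set()
entry.member_joined("FastEthernet0/0")
print([msg[0] for msg in entry.queued])  # → ['Prune', 'Graft']
```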

To be continued…